ASTM E74 and Accuracy Statements: Why an Accuracy Statement Does Not Belong on Every Calibration Certificate - Intro

The current ASTM E74-18 standard is titled Standard Practice for Calibration and Verification for Force-Measuring Instruments. Here at Morehouse, we support the best practices outlined in the ASTM E74 standard as the representation of the expected performance of a load cell or other force-measuring instrument. What may be a bit of an industry disconnect is that some companies receive a full ASTM E74 calibration report only to ignore a significant portion of it. The confusion comes when someone is used to entering an accuracy on the receiving report for the force-measuring instrument, and there is none to be found on the ASTM E74 calibration certificate.

When measurement error is reported, we have observed numerous users standing behind the common misconception that a measurement is as accurate as the standard from which it came, or adopting the fallback position that the calibration needs to be four times more accurate than the force-measuring instrument being calibrated. When these types of questions are raised, we typically observe practices falling short of the actual intent of the ASTM E74 standard.

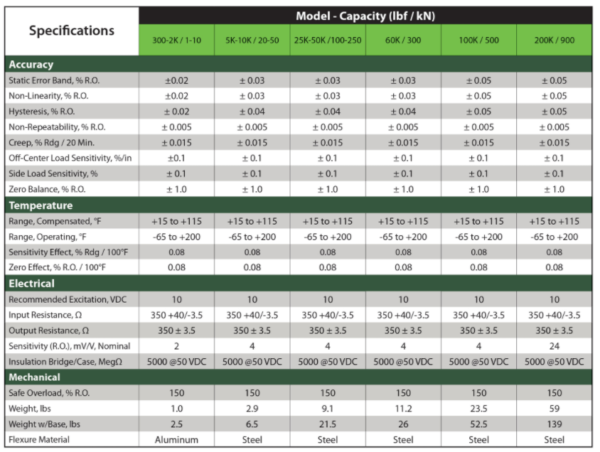

A key indication of best practices not being followed is when someone asks about an accuracy statement on the report, or does not find one and goes back to the instrument's specification sheet. The specification sheet is of little use when it comes to ASTM E74 calibration. It is useful for identifying uncertainty contributors, such as the effect of operating at various temperatures, and it helps evaluate errors that may be due to misalignment or how well the device returns to a zero condition.

The specification sheet is also useful in evaluating how good the force-measuring instrument may be. Specifically, characteristics like non-repeatability describe how well the force-measuring instrument repeats without being placed under different conditions. The major flaw is that the specification sheet does not provide the end-user with much of what they need. It does not tell the user the actual expected performance of the device. A force standard such as ASTM E74 excels at providing the end-user with meaningful data because it tests the reproducibility characteristics of the force-measuring device.

The standard provides guidance on how to perform these tests, such as randomizing force application conditions. This randomization, which is as simple as rotating and repositioning the instrument, often yields the actual expected performance of the load cell or other force-measuring instrument.

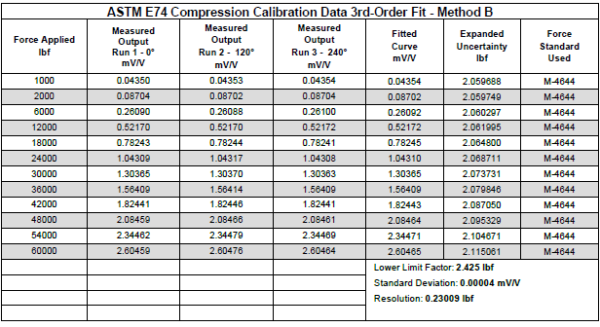

The expected performance from the ASTM E74 calibration is determined by performing a series of measurements and calculations per the standard. A standard deviation is calculated from the differences between the individual values observed in the calibration and the corresponding values taken from a regression-type equation. The standard deviation is then multiplied by a coverage factor of 2.4 to determine the Lower Limit Factor (LLF). The LLF is then used to calculate the verified range of forces. This is where certain marketing specifications can assign accuracy.
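The calculation described above can be sketched in a few lines. This is a minimal illustration, not the procedure from the standard itself: the function name, the sample readings, and the degrees-of-freedom convention (number of readings minus polynomial degree minus one) are our assumptions and should be checked against the current ASTM E74 text before use.

```python
import math


def lower_limit_factor(observed, fitted, degree, coverage=2.4):
    """Return (standard deviation, LLF) from calibration residuals.

    observed: instrument readings taken during calibration
    fitted:   values predicted by the regression equation at the same forces
    degree:   degree of the fitted polynomial (assumed convention for the
              degrees of freedom: n - degree - 1)
    """
    if len(observed) != len(fitted):
        raise ValueError("observed and fitted must have the same length")
    dof = len(observed) - degree - 1
    if dof <= 0:
        raise ValueError("not enough readings for this polynomial degree")
    sum_sq = sum((o - f) ** 2 for o, f in zip(observed, fitted))
    std_dev = math.sqrt(sum_sq / dof)
    return std_dev, coverage * std_dev


# Hypothetical readings and fitted values, in the instrument's output units
readings = [1.0, 2.0, 3.0, 4.0, 5.0]
predicted = [1.1, 1.9, 3.1, 3.9, 5.0]
s, llf = lower_limit_factor(readings, predicted, degree=2)
print(f"standard deviation = {s:.4f}, LLF = {llf:.4f}")
```

A real ASTM E74 calibration uses far more readings, taken across several runs with the instrument rotated and repositioned, so the residuals capture reproducibility and not just repeatability.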

A good example is in the marketing materials for Morehouse load cells. On our Ultra-Precision Load Cells, we specify that the load cells are accurate to 0.005 % of full scale. What we are saying is that the ASTM LLF, which is the expected performance of the load cell, is better than 0.005 % of full scale. However, this is only one component of the much larger Calibration and Measurement Capability uncertainty parameter (sometimes referred to as CMC). That is, under the same conditions that Morehouse used for calibration, the device is expected to perform better than 0.005 % of full scale. On a 10,000 lbf load cell, the expected performance should be better than 0.5 lbf (10,000 lbf × 0.005 %).

So, we are saying that at the time of calibration, the load cell’s expected performance will be better than 0.005 % or 50 parts per million. If we continue to follow the ASTM E74 standard, the calculated LLF is then used to determine the usable range for the device. If you are one of those companies not using the load cell for ASTM E74, E18, E10, E4, or other standards referencing ASTM E74, this verified range of forces may not hold much value. Thus, if you are not continuing to follow other ASTM standards or a standard referencing ASTM E74, you do not have to read the next paragraph.

Class A and AA Verified Range of Forces

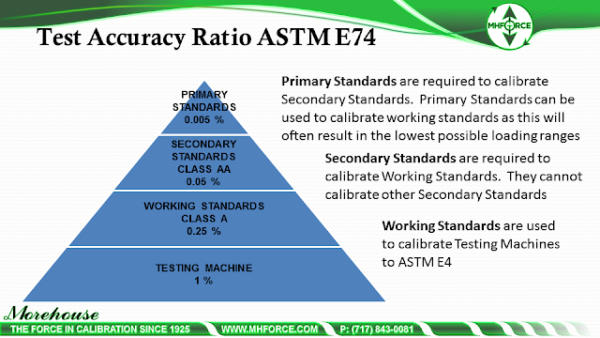

If you are using the load cell to perform other calibrations in accordance with one of these standards, the Class A or AA verified range of forces uses the LLF and multiplies the number by either 400 or 2000. The standard uses the LLF or resolution of the force-measuring device, whichever is higher, to determine the verified range of forces. If the force-measuring instrument is going to be used to calibrate other force-measuring instruments per ASTM E74, then a Class AA verified range of forces is a requirement. This takes whichever is higher between the resolution or LLF and multiplies the number by 2000.

Essentially, it ensures the first test point, defined as 2000 times the resolution or LLF, is at least five times more accurate than what is being tested. This assumes the device being tested needs to be accurate to 0.25 %. It is important to note that the only way to assign a Class AA verified range of forces is to calibrate the device with deadweight primary standards known to be better than 0.005 % of applied force. A load cell can never be used to assign a Class AA verified range of forces. If the end goal is to calibrate a testing machine to ASTM E4, then the higher of the resolution or LLF is multiplied by 400. This assigns a Class A verified range of forces in which the first point is four times more accurate than what is being tested.

Let's Look at the Math

Consider a 10,000 lbf Morehouse load cell with an LLF of 0.5 lbf. We multiply the 0.5 lbf by 2000 and get 1000 lbf. 0.5 lbf divided by 1000 lbf = 0.05 %. Most applications here relate to calibrating other instruments and assigning a Class A verified range of forces. The specification for Class A is 0.25 %, so at 0.05 % our load cell is five times more accurate than what is being calibrated at the first point in the Class AA verified range of forces.

Now take the same 10,000 lbf Morehouse load cell with an LLF of 0.5 lbf. We multiply the 0.5 lbf by 400 and get 200 lbf. 0.5 lbf divided by 200 lbf = 0.25 %. Most applications here relate to calibrating testing machines with the allowable Class A verified range of forces. The specification for most testing machines is 1 %, which means our load cell is four times more accurate than what is being calibrated at the first point in the usable verified range of forces.
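The same arithmetic is easy to script. The sketch below uses the 0.5 lbf LLF from the example and the 400 and 2000 multipliers from the text; the function name and the 0.1 lbf resolution are hypothetical values chosen for illustration.

```python
CLASS_A_MULTIPLIER = 400
CLASS_AA_MULTIPLIER = 2000


def verified_range_lower_limit(llf, resolution, multiplier):
    """Lowest force in the verified range of forces.

    Uses whichever is higher, the LLF or the resolution, times the
    class multiplier (400 for Class A, 2000 for Class AA).
    """
    return multiplier * max(llf, resolution)


llf_lbf = 0.5         # LLF from the example
resolution_lbf = 0.1  # hypothetical resolution, smaller than the LLF

for name, multiplier in (("Class AA", CLASS_AA_MULTIPLIER),
                         ("Class A", CLASS_A_MULTIPLIER)):
    lower = verified_range_lower_limit(llf_lbf, resolution_lbf, multiplier)
    percent = 100.0 * max(llf_lbf, resolution_lbf) / lower
    print(f"{name}: first point = {lower:.0f} lbf, LLF is {percent:.2f} % of it")
# Class AA: first point = 1000 lbf, LLF is 0.05 % of it
# Class A: first point = 200 lbf, LLF is 0.25 % of it
```

If the resolution were larger than the LLF, the resolution would set the lower limit instead, which is why a coarse indicator can shrink the verified range even when the load cell itself performs well.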

Accuracy Specification – How Accurate is that Accuracy Statement

If we look at the numbers and how they relate to everyday use, we need to understand how to derive precisely what is required from the data. Is it fair to assume the ASTM LLF can easily be substituted for an accuracy number? Not really. The ASTM E74 standard is built on a pyramid where if everyone follows the standard, it works.

The main issue is that ISO/IEC 17025:2017 and other standards do not contain enough detail on how to propagate measurement uncertainty further. Even if the end-user is fully following all the guidelines outlined in ASTM E74, they still need to calculate measurement uncertainty correctly to comply with ISO/IEC 17025. If they do not follow all of the guidelines, they will omit uncertainty contributors, and that makes an instrument look much better than it is.

In these cases, some manufacturers take shortcuts and proceed with a game of specsmanship. They may omit major error sources like reproducibility and resolution, and base specifications on averages rather than good metrological practices. Luckily, Morehouse has drafted a guidance document on this topic. The document was adopted by A2LA and can be found at G126 - Guidance on Uncertainty Budgets for Force Measuring Devices. If someone is calculating TUR to make a statement of conformity, they need to follow the proper guidelines for measurement uncertainty as well as calculate TUR correctly.

TUR and Accredited Calibrations

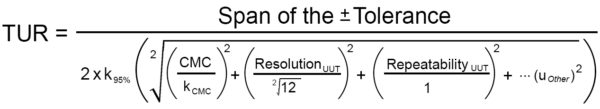

If you are accredited and need to make a statement of conformity, you need to follow the ISO/IEC 17025:2017 standard, which involves discussing what decision rule is to be followed. In any case, a TUR (Test Uncertainty Ratio) is going to be calculated, and this ratio will involve a numerator and a denominator. Per the ANSI/NCSL Z540.3 Handbook, “For the numerator, the tolerance used in the calibration procedure should be used in the calculation of the TUR. This tolerance is to reflect the organization’s performance requirements for the M&TE, which are, in turn, derived from the intended application of the M&TE.

In most cases, these performance requirements are those described by the Manufacturer’s tolerances and specifications for the M&TE and are therefore included in the numerator.” In most cases, we can simplify the numerator to UUT Tolerance. The denominator is a bit more complicated as ANSI/NCSL Z540.3 Handbook states, “For the denominator, the 95 % expanded uncertainty of the measurement process used for calibration following the calibration procedure is to be used to calculate TUR. The value of this uncertainty estimate should reflect the results that are reasonably expected from the use of the approved procedure to calibrate the particular type of M&TE. Therefore, the estimate includes all components of error that have an influence on the measurement results of the calibration, which would also include the influences of the item being calibrated with the exception of the bias of the M&TE.

The calibration process error, therefore, includes temporary and non-correctable influences incurred during the calibration such as repeatability, resolution, error in the measurement source, operator error, error in correction factors, environmental influences, etc.” This definition of the denominator agrees very closely with ILAC P-14, which in section 6.4 states, “Contributions to the uncertainty stated on the calibration certificate shall include relevant short-term contributions during calibration and contributions that can reasonably be attributed to the customer’s device.

Where applicable, the uncertainty shall cover the same contributions to the uncertainty that were included in the evaluation of the CMC uncertainty component, except that uncertainty components evaluated for the best existing device shall be replaced with those of the customer’s device.”

The above figure shows the ratio of the UUT tolerance, which is often requested to be the manufacturer’s specification, to the expanded uncertainty of the calibration process. At a minimum, this should include the uncertainty of the measurement process (labeled as CMC, though it is the CMC uncertainty component) as well as the resolution of the UUT. There are some instances where the repeatability of the best existing device is replaced with that of the UUT, as referenced in ILAC P-14. Not accounting for the resolution of the UUT can result in increased risk to the end-user unless that resolution is the same as what was considered in the CMC uncertainty component.

Expected Performance – What Does a Single Run of Data Actually Tell Us?

The ASTM calibration procedure, when followed properly, will give the end-user an excellent starting point. This is much better than the 5-pt. commercial calibrations that are often used to verify non-linearity and possibly hysteresis. These 5-pt. calibrations do not even remotely start to address how fit for use the force-measuring instrument may be.

At best, they say this device can make a measurement and not deviate from the line. However, they miss the reproducibility aspect of the measurement entirely. These types of calibrations typically only relate to letting the end-user know if their force-measuring instrument is functional per minimal requirements. They truly do not let the end-user know anything about the expected performance of the force-measuring instrument.

The expected performance, as determined by ASTM E74, is a complete method to ensure confidence. However, the end-user must know that the calibration often represents how the force-measuring instrument will function in laboratory-like situations. These are situations where the force machines are often plumb, level, square, rigid, and have low torsion.

The end-user must use the proper force transfer machines and use the same adapters in service that were used during calibration. Changing the conditions from how the force-measuring device was calibrated is like “de-rating” the device. If the end-user uses the force-measuring device at a different temperature, the results will vary by about 0.0015 % per degree Celsius. If they do not use an integral adapter and vary the thread depth into the load cell, the results could vary by 0.1 % or more. We have written several papers and blogs regarding the importance of adapters. Our award-winning article on adapters can be found here.
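To put the temperature and adapter figures above into force units, here is a small sketch. The function name, the 5 °C temperature difference, and the choice of a 10,000 lbf applied force are hypothetical; the 0.0015 % per degree Celsius and 0.1 % figures come from the paragraph above and are approximate.

```python
def temperature_effect_lbf(applied_force_lbf, delta_t_celsius,
                           coeff_percent_per_c=0.0015):
    """Approximate change in reading caused by a temperature difference."""
    return applied_force_lbf * (coeff_percent_per_c / 100.0) * abs(delta_t_celsius)


applied = 10_000.0  # lbf
temp_shift = temperature_effect_lbf(applied, delta_t_celsius=5.0)
thread_depth_shift = applied * 0.1 / 100.0  # 0.1 % from varying thread depth

print(f"5 degC away from calibration: about {temp_shift:.2f} lbf")
print(f"Thread depth variation: about {thread_depth_shift:.1f} lbf or more")
```

Compared with an expected performance of 0.5 lbf at calibration, either effect on its own can exceed the LLF, which is why conditions of use belong in the uncertainty budget.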

Note: Not using the proper adapters to calibrate load cells, truck & aircraft scales, tension links, dynamometers, and other force-measuring devices can produce significant measurement errors and pose serious safety concerns.

ASTM E74 and Accuracy Statements: Why an Accuracy Statement Does Not Belong on Every Calibration Certificate - Conclusion

I hope this article has helped show that the specification sheet is not the only thing one should consider in assessing the actual performance characteristics of a force-measuring instrument, and that much more needs to be done than what is on the specification sheet.

Interestingly, if we just looked at most specification sheets, we would never find the specification on how good the force-measuring instrument is. We need to conduct actual testing for that number, and the testing should not be a 5-pt. commercial calibration or anything similar. We should pay careful attention to our loading conditions, instrumentation, and the adapters we use when we use the force-measuring instrument.

That testing should be a calibration to a legal document that determines the reproducibility condition of the measurement: a standard like ASTM E74, or something similar that is a legally binding standard that the best in the industry continue to evolve. For force measurement, the two most common are ASTM E74 and ISO 376. We have an article on these that can be found here.

Following the guidelines in A2LA's G126 is the right way to do budgets. Blindly accepting a manufacturer's accuracy specification will most likely lead to underestimating the uncertainty of measurement, which will result in higher risk, and maybe catastrophic failures.

I take great pride in our knowledgeable team at Morehouse, who will work with you to find the right solution. We have now been in business for over a century and have a focus on being the most recognized name in the force business. That vision comes from educating our customers on what matters most and having the right discussions. Morehouse will not commit to providing a system if we cannot meet your expectations.
